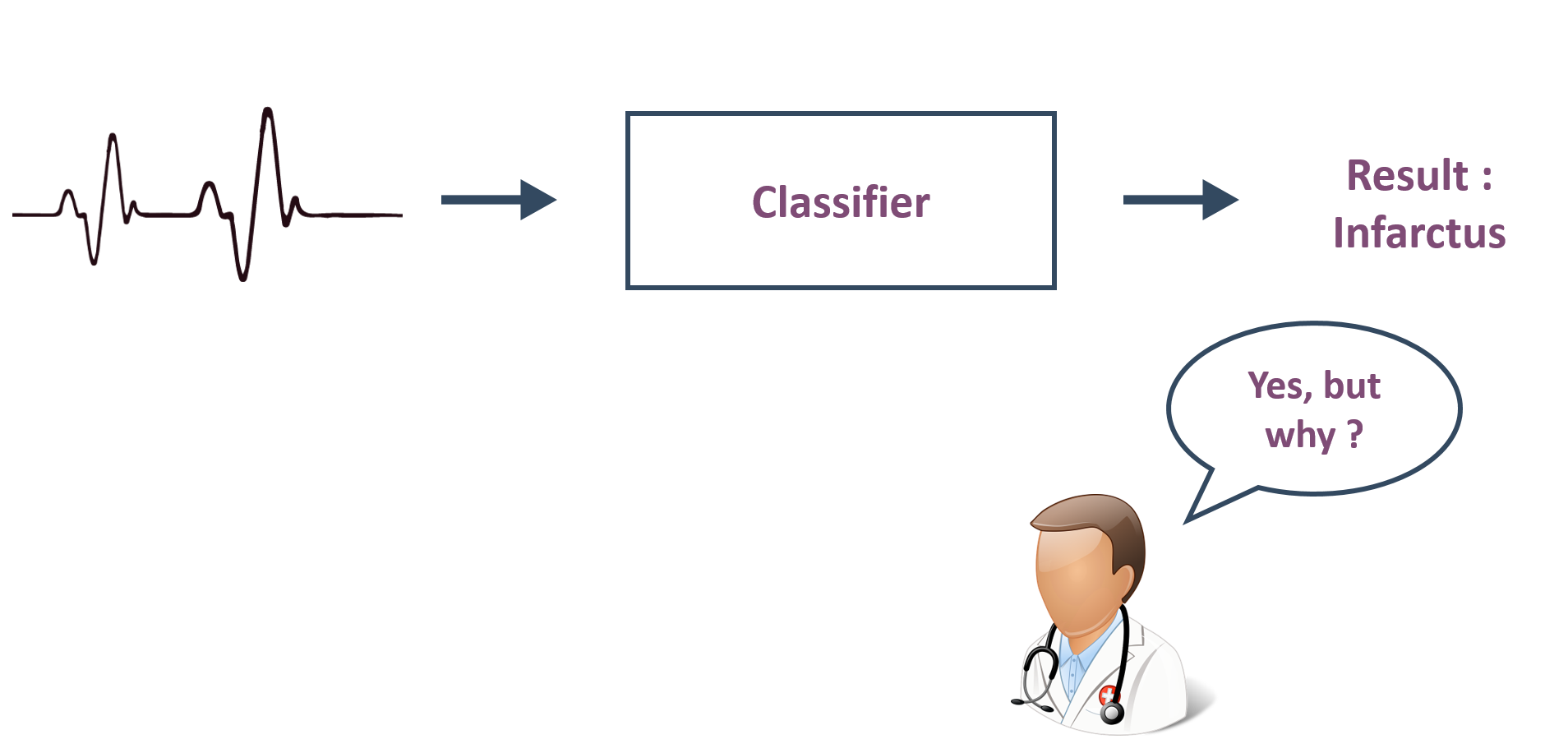

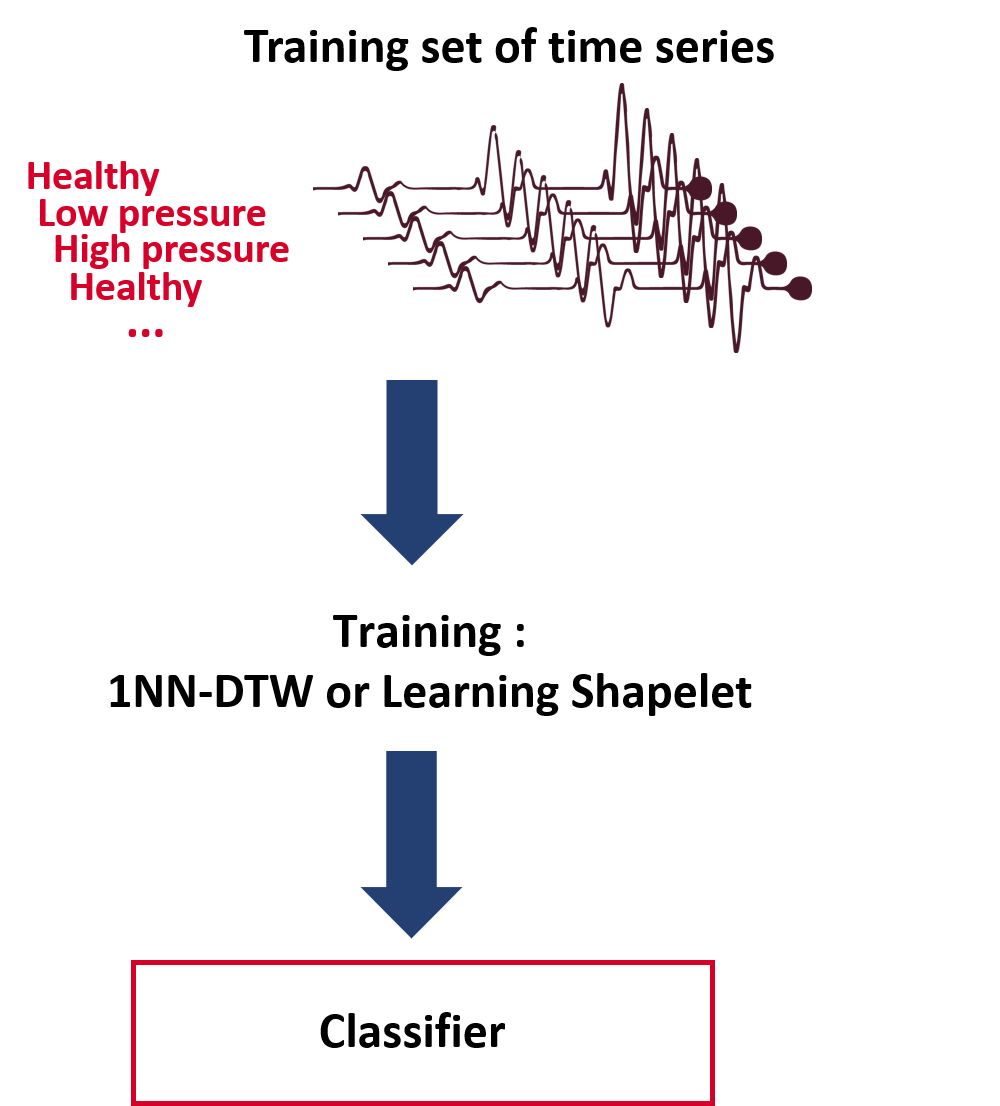

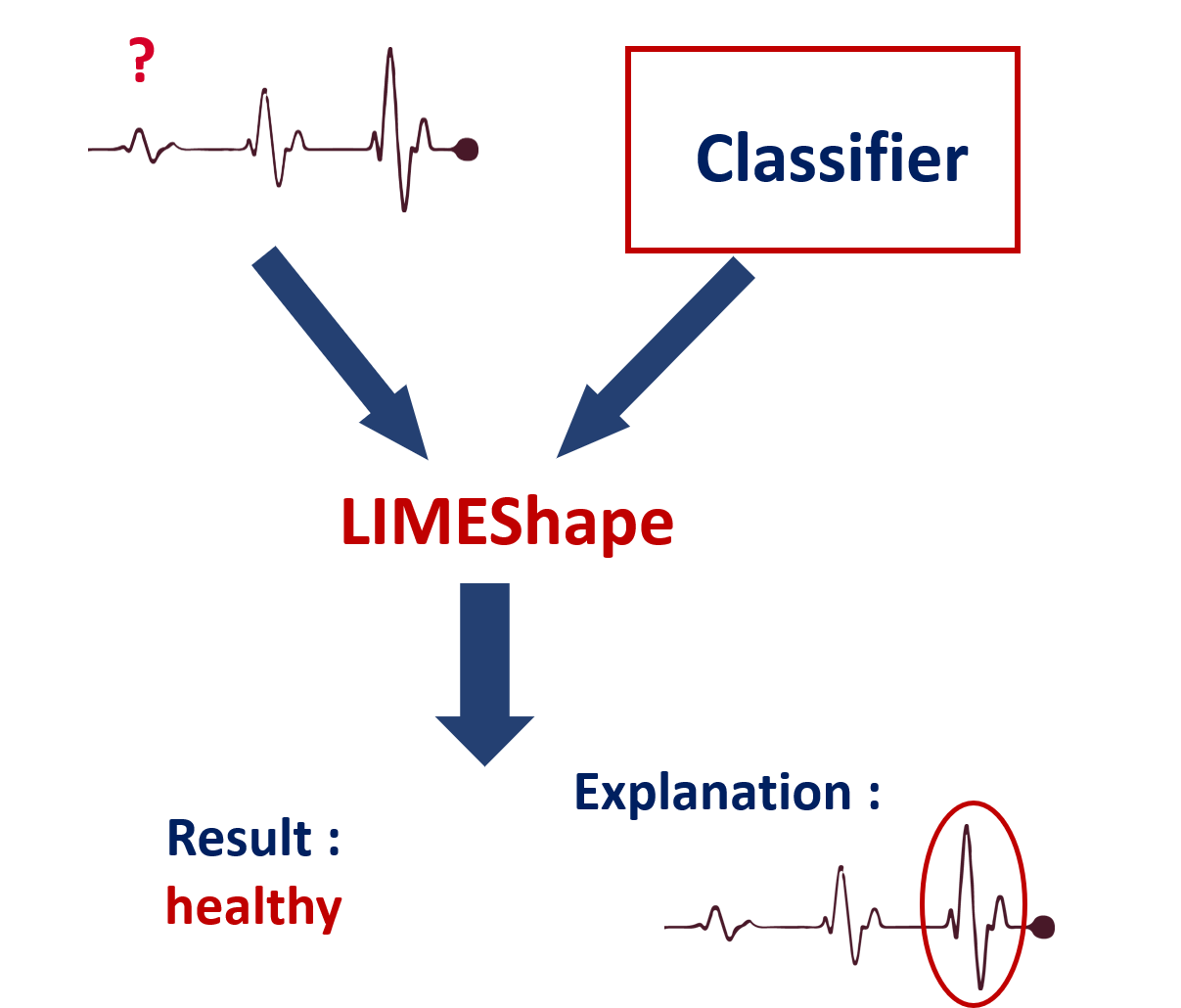

Once a classifier has been trained and saved, it can be used to classify a new time series.

A user can import a saved classifier along with the time series they want to classify.
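The import step can be sketched as two small loaders. This sketch makes two assumptions that this page does not confirm: the classifier was saved with Python's `pickle`, and the time series is a CSV file with one numeric value per row. The helper names `load_classifier` and `load_time_series` are hypothetical. Only unpickle files you trust, because `pickle.load` can execute arbitrary code.

```python
import csv
import pickle
from typing import Any, List


def load_classifier(path: str) -> Any:
    """Load a classifier saved with pickle.dump (assumed storage format)."""
    with open(path, "rb") as f:
        return pickle.load(f)


def load_time_series(path: str) -> List[float]:
    """Read a univariate time series: one numeric value per CSV row, blank rows skipped."""
    with open(path, newline="") as f:
        return [float(row[0]) for row in csv.reader(f) if row]
```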

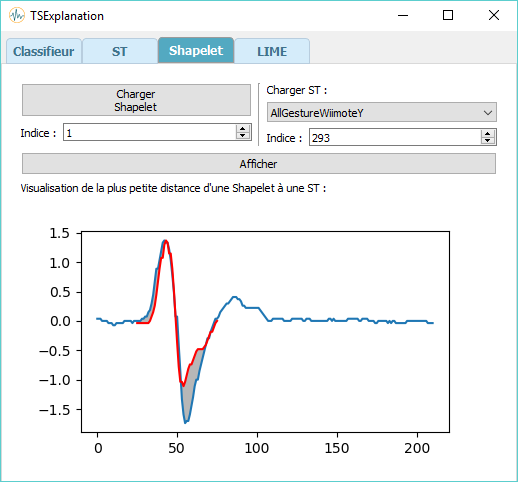

TSExplanation then uses the imported classifier to classify the time series. It displays the predicted class together with an explanation of that prediction.
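The classification step amounts to picking the most probable class. The minimal sketch below assumes the classifier exposes a hypothetical `predict_proba` callable that maps a series to a dictionary of class probabilities. It is not TSExplanation's actual interface.

```python
from typing import Callable, Dict, Sequence, Tuple


def classify(series: Sequence[float],
             predict_proba: Callable[[Sequence[float]], Dict[str, float]]) -> Tuple[str, float]:
    """Return the most probable class label and its probability."""
    probs = predict_proba(series)
    label = max(probs, key=probs.get)  # class with the highest probability
    return label, probs[label]
```

For example, `classify([1.0, 2.0, 3.0], lambda s: {"normal": 0.2, "anomaly": 0.8})` returns `("anomaly", 0.8)`.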

This explanation is generated by LIMEShape, an adaptation of the LIME algorithm dedicated to time series.
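The general LIME idea applied to time series can be sketched as follows. This is a generic, LIME-style segment explanation, not LIMEShape itself. It uses the following assumptions:

* The series is split into equal-length segments.
* Random subsets of segments are "switched off" by replacing them with the series mean.
* The classifier is queried on each perturbed series.
* Perturbations are weighted with an exponential kernel on the fraction of segments removed.
* A weighted ridge regression is fitted, whose per-segment coefficients serve as the explanation.

All function and parameter names are hypothetical.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple


def segment_bounds(n: int, n_segments: int) -> List[Tuple[int, int]]:
    """Split indices 0..n-1 into n_segments contiguous, near-equal slices."""
    edges = [round(i * n / n_segments) for i in range(n_segments + 1)]
    return [(edges[i], edges[i + 1]) for i in range(n_segments)]


def perturb(series, bounds, mask, fill):
    """Copy the series, replacing every segment whose mask bit is 0 with `fill`."""
    out = list(series)
    for (start, end), keep in zip(bounds, mask):
        if not keep:
            out[start:end] = [fill] * (end - start)
    return out


def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def explain(series: Sequence[float], predict_proba: Callable[[Sequence[float]], float],
            n_segments: int = 5, n_samples: int = 500, kernel_width: float = 0.75,
            ridge: float = 1e-3, seed: int = 0) -> List[float]:
    """Return one weight per segment; predict_proba gives the probability of the explained class."""
    rng = random.Random(seed)
    bounds = segment_bounds(len(series), n_segments)
    fill = sum(series) / len(series)  # value used for switched-off segments
    dim = n_segments + 1  # intercept + one coefficient per segment
    xtwx = [[0.0] * dim for _ in range(dim)]
    xtwy = [0.0] * dim
    for i in range(n_samples):
        # First sample is the unperturbed series; the rest are random on/off masks.
        mask = [1] * n_segments if i == 0 else [rng.randint(0, 1) for _ in range(n_segments)]
        y = predict_proba(perturb(series, bounds, mask, fill))
        dist = 1 - sum(mask) / n_segments  # fraction of segments switched off
        w = math.exp(-dist ** 2 / kernel_width ** 2)  # closer samples weigh more
        x = [1.0] + [float(v) for v in mask]
        for r in range(dim):  # accumulate the weighted normal equations
            xtwy[r] += w * x[r] * y
            for c in range(dim):
                xtwx[r][c] += w * x[r] * x[c]
    for r in range(1, dim):  # ridge penalty; the intercept is left unpenalised
        xtwx[r][r] += ridge
    return solve(xtwx, xtwy)[1:]
```

A large positive coefficient means that keeping the segment pushes the prediction towards the explained class.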

The explanation is displayed as a graph: the parts of the time series that contributed most to the classification are colored green, while those that contributed least are colored red.
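One way to turn per-segment weights into the green/red coloring is sketched below. This is an assumption, not necessarily how TSExplanation colors its graph. Here the sign of each weight picks the hue (green for positive, red for negative), and the color intensity scales with the weight's magnitude relative to the largest one. The function name `segment_colors` is hypothetical.

```python
from typing import List, Sequence


def segment_colors(weights: Sequence[float]) -> List[str]:
    """Map each segment weight to an RGB hex colour (green >= 0, red < 0; white means no effect)."""
    scale = max((abs(w) for w in weights), default=0.0) or 1.0  # avoid division by zero
    colors = []
    for w in weights:
        level = round(255 * abs(w) / scale)  # 0 = no contribution, 255 = strongest
        fade = 255 - level  # lower fade -> more saturated colour
        if w >= 0:
            colors.append(f"#{fade:02x}ff{fade:02x}")
        else:
            colors.append(f"#ff{fade:02x}{fade:02x}")
    return colors
```

For example, `segment_colors([0.5, -0.5, 0.0])` gives `["#00ff00", "#ff0000", "#ffffff"]`.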

A user can therefore see which parts of the time series led the tool to its decision.

As a consequence, users can judge for themselves the work TSExplanation has done and, in turn, trust the tool.