Who for?

The functional rehabilitation center at Kerpape welcomes people with disabilities, whether mental or physical. For them, the center has two Launchpads: flats that are fully equipped with automated furniture and controls. These flats let residents discover home automation (domotics) and learn how to use it.

Why?

Unfortunately, these flats are very expensive and Kerpape only owns two. To help disabled people learn how to use them, Willy Allègre and Jean-Paul Despartes asked us to build software that addresses this problem. Their idea is to let people learn how to use the flats through virtual reality, without being physically inside them. Then, when they get to use the real flats, they already know how to operate the different pieces of equipment and lose less time. This allows Kerpape to rent the flats out for shorter periods and lets more people have a go.

How?

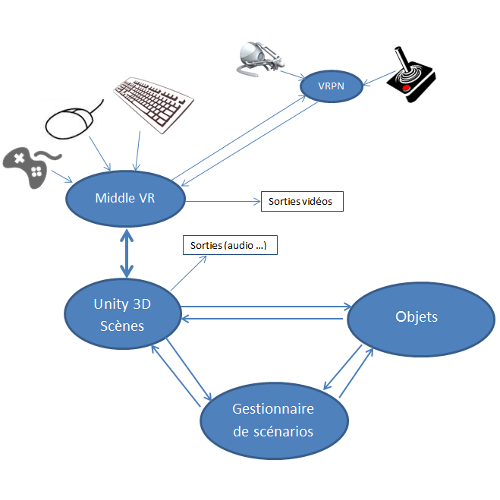

For this project, we are using Unity3D, a game engine that allows us to generate realistic scenes on the fly. A variety of plugins are attached to it, including MiddleVR, which adapts Unity to virtual reality environments: it handles stereoscopic displays, supports a wide range of input peripherals, allows on-the-fly configuration of inputs and outputs, and more. It also allows us to display interactive menus that can be used even without a mouse in an immersive room!